Self-hosted AI breaks. That’s fine. So does firmware. So do hashboards. So does the PSU you bought off a sketchy Alibaba link in 2021. If you’ve spent any time running your own mining hardware, you’ve already built the troubleshooting muscle: read the logs, isolate the variable, replace what’s broken, move on. This post just translates the common AI failure modes into vocabulary you already use.

Every section below follows the same shape as an error code entry: Symptom, Cause, Fix. Scroll to yours. No ceremony.

Before you dive into a specific issue, run this diagnostic sweep first. Nine times out of ten the answer is already on screen:

ollama list # what models are installed

ollama ps # what's loaded, CPU or GPU?

nvidia-smi # NVIDIA: driver + VRAM + processes

rocm-smi # AMD equivalent

# macOS: Activity Monitor → Window → GPU History

docker ps # Open WebUI or other containers up?

journalctl -u ollama -n 50 # systemd users: last 50 log lines

The PROCESSOR column of ollama ps alone tells you whether your GPU is being used. If it says 100% CPU, skip to section 2 (NVIDIA) or section 3 (AMD), depending on your hardware. Everything else is detail.
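As a rough sketch of that first check, the function below classifies ollama ps output by its PROCESSOR column. It assumes the column reads 100% GPU, 100% CPU, or a split such as 48%/52% CPU/GPU. The sample rows are illustrative, and classify_processor is a hypothetical helper name, not part of Ollama.

```shell
#!/bin/sh
# Hypothetical helper: reads `ollama ps` output on stdin and reports where the
# loaded models are running. If any model is CPU-only, that result wins.
classify_processor() {
  input=$(cat)
  if printf '%s\n' "$input" | grep -Eq '(^|[[:space:]])100% CPU([[:space:]]|$)'; then
    echo "cpu-only"
  elif printf '%s\n' "$input" | grep -q 'CPU/GPU'; then
    echo "split"
  elif printf '%s\n' "$input" | grep -Eq '(^|[[:space:]])100% GPU([[:space:]]|$)'; then
    echo "gpu"
  else
    echo "nothing-loaded"
  fi
}

# Illustrative sample, not captured from a real machine.
sample='NAME          ID              SIZE      PROCESSOR    UNTIL
llama3.1:8b   0123456789ab    6.7 GB    100% CPU     4 minutes from now'

printf '%s\n' "$sample" | classify_processor   # prints: cpu-only
```

On a real machine the call would be ollama ps | classify_processor.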

1. “ollama: command not found” after install

Symptom: You ran the official install script, it printed success, and ollama still isn’t a recognized command in your shell.

Cause: Your shell’s PATH didn’t pick up /usr/local/bin in the current session, or the install script hit a silent failure partway through — usually an unsupported glibc on an older distro, or a write error under /usr/local/bin.

Fix:

– Open a new terminal, or run source ~/.bashrc (or ~/.zshrc). Nine out of ten cases, that’s it.

– Confirm the binary landed: ls -la /usr/local/bin/ollama. If it’s missing, the install failed.

– Re-run with logging so you can see what broke: curl -fsSL https://ollama.com/install.sh | sh 2>&1 | tee install.log, then read install.log top to bottom.

– If you’re on an older LTS with glibc <2.31, upgrade the distro or build llama.cpp from source instead. Ollama’s static builds target reasonably modern libc.
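The first two checks above can be scripted. This is a minimal sketch that assumes the installer's default location of /usr/local/bin; path_has_dir is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: succeeds if directory $1 is an entry in the
# colon-separated list $2 (defaults to the current PATH).
path_has_dir() {
  case ":${2-$PATH}:" in
    *":$1:"*) return 0 ;;
    *)        return 1 ;;
  esac
}

if path_has_dir /usr/local/bin; then
  echo "/usr/local/bin is on PATH"
else
  echo "/usr/local/bin is missing from PATH; add it in ~/.bashrc or ~/.zshrc"
fi

# Separately confirm the binary itself exists and is executable.
if [ -x /usr/local/bin/ollama ]; then
  echo "binary present"
else
  echo "binary missing: the install did not complete"
fi
```

If the directory is on PATH and the binary is present but the command still isn't found, a fresh terminal is usually all that's left to try.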

Credit where it’s due: the Ollama team packages llama.cpp and an install UX that Just Works on ~95% of systems. When it doesn’t, it’s almost always a PATH or glibc issue, not Ollama’s fault.

2. GPU not detected (NVIDIA)

Symptom: Ollama runs, models load, but ollama ps shows 100% CPU in the PROCESSOR column. Tokens crawl out at 2–4 tok/s on an 8B model that should be doing 60+.

Cause: One of four things, in order of frequency:

- NVIDIA driver isn’t installed (or is the wrong version).

- CUDA runtime mismatch — Ollama’s bundled llama.cpp wants a newer CUDA than your driver exposes.

- /dev/nvidia* device nodes aren’t present or readable by the ollama user.

- You’re on Docker or WSL2 and the GPU passthrough plumbing isn’t configured.

Fix:

– First, nvidia-smi from your Hashcenter shell. If that fails, no amount of Ollama config will help — fix the driver before going further. sudo apt install nvidia-driver-570 (or whatever current is) on Debian/Ubuntu, dnf install nvidia-driver on RHEL-family.

– Read Ollama’s GPU-detection logs directly: journalctl -u ollama | grep -i -E "cuda|gpu". The startup lines say whether a CUDA device and its compute capability were found, or that no compatible GPU was discovered.

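– Docker: install the NVIDIA Container Toolkit first (the nvidia-container-toolkit package from NVIDIA’s apt repo), register it with sudo nvidia-ctk runtime configure --runtime=docker, restart Docker, and then add --gpus=all to your docker run invocation. Without the toolkit, --gpus=all fails with a “could not select device driver” error instead of starting the container.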

– WSL2: the driver must be installed in Windows, not inside WSL. Then nvidia-smi inside WSL should work. If it does, Ollama will find the GPU.

– Device nodes: ls -la /dev/nvidia*. If they don’t exist after driver install, sudo nvidia-modprobe -c0 -u or reboot.
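The host-side checks can be run in the same order as the list above. This sketch stops at the first layer that fails; nvidia_first_failure is a hypothetical helper name, and it does not cover Docker or WSL2 passthrough.

```shell
#!/bin/sh
# Hypothetical helper: walks the host-side checks in order and prints the
# first layer that fails.
nvidia_first_failure() {
  if ! command -v nvidia-smi >/dev/null 2>&1; then
    echo "driver: nvidia-smi is not installed or not on PATH"
  elif ! nvidia-smi >/dev/null 2>&1; then
    echo "driver: nvidia-smi is installed but cannot talk to the GPU"
  elif ! ls /dev/nvidia* >/dev/null 2>&1; then
    echo "device-nodes: /dev/nvidia* is missing"
  else
    echo "ok: driver and device nodes look fine, read the Ollama logs next"
  fi
}

nvidia_first_failure
```

Anything that prints a driver: line means Ollama config is not the problem yet.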

Credit: NVIDIA for CUDA and for making nvidia-smi the most useful diagnostic tool in any GPU stack.

3. GPU not detected (AMD / ROCm)

Symptom: Same as section 2, on AMD hardware. ollama ps says CPU, tokens are slow.

Cause:

1. ROCm version too old — Ollama’s llama.cpp needs ROCm 5.7+.

2. Your GPU isn’t on AMD’s ROCm supported list (check before buying, not after).

3. The ollama user can’t read /dev/kfd or /dev/dri/renderD128.

4. Older GPU that needs the HSA_OVERRIDE_GFX_VERSION escape hatch.

Fix:

– rocm-smi should list your card. If it doesn’t, your ROCm install is broken — reinstall from AMD’s repo, not the distro package.

– Check version: rocminfo | grep -i version or apt show rocm-libs. Below 5.7, upgrade.

– Permissions: sudo usermod -aG render,video ollama. Restart the ollama service afterward (systemctl restart ollama).

– Supported list: RDNA3 flagships (7900 XTX, 7900 XT, 7900 GRE) work out of the box. RDNA2 (6800/6900 XT) mostly works. Older Vega/RDNA1 often needs HSA_OVERRIDE_GFX_VERSION=10.3.0 (or similar) set in the ollama service environment.

– If you’re running an override, put it in /etc/systemd/system/ollama.service.d/override.conf:

[Service]

Environment="HSA_OVERRIDE_GFX_VERSION=10.3.0"

Then systemctl daemon-reload && systemctl restart ollama.
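If you'd rather script the drop-in than edit it by hand, here is a minimal sketch. render_override is a hypothetical helper name, and 10.3.0 is simply the value used above, which may not be right for your card.

```shell
#!/bin/sh
# Hypothetical helper: prints a systemd drop-in that sets
# HSA_OVERRIDE_GFX_VERSION for the ollama service.
render_override() {
  printf '[Service]\nEnvironment="HSA_OVERRIDE_GFX_VERSION=%s"\n' "$1"
}

render_override 10.3.0

# To install it (needs root), something like:
#   sudo mkdir -p /etc/systemd/system/ollama.service.d
#   render_override 10.3.0 | sudo tee /etc/systemd/system/ollama.service.d/override.conf
#   sudo systemctl daemon-reload && sudo systemctl restart ollama
```

Printing the file first lets you eyeball it before anything under /etc changes.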

Credit: AMD for ROCm, and the llama.cpp team for shipping ROCm support alongside CUDA — it’s not trivial.

4. Out of memory (OOM) on model load

Symptom: Model downloads fine, then ollama run <model> prints something like Error: llama runner process has terminated: exit status 2 or failed to load model: out of memory.

Cause: The model’s weights plus KV cache don’t fit in your VRAM. Usually it’s one of:

- Model is simply too big for your card (70B Q4 on a 16 GB GPU: no).

- Context window is set huge (128K context on Llama 3.1 70B needs roughly 40 GB of fp16 KV cache on top of the weights).

- Another process is holding VRAM — a stray ComfyUI instance, a forgotten browser tab with a WebGL demo, your desktop compositor.

Fix:

– Smaller quantization is the first lever. Go Q8 → Q4 → Q3 before you panic. See Quantization Explained for which tradeoffs matter.

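– Reduce context: start ollama run llama3.1, then type /set parameter num_ctx 2048 at the prompt, or put PARAMETER num_ctx 2048 in a Modelfile. ollama run does not take a --num_ctx flag. The default is often 4096 or 8192 depending on the Ollama version and the model’s Modelfile.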

– Free VRAM: nvidia-smi shows the process list at the bottom. Identify the squatter, kill it.

– Drop to a smaller variant: Llama 3.1 70B → Llama 3.1 8B; Gemma 3 27B → Gemma 3 12B → Gemma 3 4B. The smaller ones are shockingly good in 2026.

– Mixed CPU+GPU offload: set num_gpu to a number less than total layers in a Modelfile. Ollama puts that many layers on GPU and spills the rest to system RAM. Slower than pure GPU, still way faster than pure CPU.

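A rule of thumb: VRAM needed ≈ (model file size in GB) + KV cache, where KV cache in bytes ≈ 2 × layers × KV heads × head dimension × 2 bytes (fp16) × context tokens. For Llama 3.1 that works out to about 0.125 GB per 1K tokens on 8B and about 0.31 GB per 1K tokens on 70B. Imprecise (runtime buffers add a little more), but it gets you in the right neighborhood.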
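To put numbers on the KV cache side of that estimate, here is a minimal sketch of the usual per-token calculation: keys plus values, for every layer and KV head. The layer, head, and dimension values are assumptions taken from the published Llama 3.1 configs, fp16 cache is assumed unless you pass a fifth argument, and kv_cache_mib is a hypothetical helper name.

```shell
#!/bin/sh
# kv_cache_mib LAYERS KV_HEADS HEAD_DIM CONTEXT_TOKENS [BYTES_PER_VALUE]
# Estimates KV cache size in MiB: 2 (keys and values) x layers x KV heads
# x head dimension x bytes per value x context tokens.
kv_cache_mib() {
  bytes_per_value=${5:-2}   # 2 = fp16 (assumption); a quantized KV cache uses less
  echo $(( 2 * $1 * $2 * $3 * bytes_per_value * $4 / 1048576 ))
}

# Architecture numbers assumed from the published Llama 3.1 configs.
kv_cache_mib 32 8 128 8192      # 8B at 8K context: prints 1024
kv_cache_mib 80 8 128 131072    # 70B at 128K context: prints 40960
```

Add the result to the size of the model file to see whether a given context fits your card.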

5. Slow tokens / inference is CPU-bound

Symptom: Model loaded, no OOM, but tok/s is stuck under 5 on what should be an easy 8B model.

Cause:

– Offloaded layer count is 0 — Ollama decided nothing fit on GPU and went full CPU.

– GPU is detected but num_gpu got set to 0 somewhere (Modelfile, env var, CLI flag).

– Thermal throttling — a buried card in a hot rack will downclock hard.

– PCIe lane starvation — x1 riser left over from mining-era hardware.

Fix:

– ollama ps first. If PROCESSOR says CPU, go back to section 2 or section 3.

– Force max offload: create a Modelfile with PARAMETER num_gpu 999. Ollama will offload every layer that fits. Build with ollama create mymodel -f Modelfile.

– Watch during inference: run nvidia-smi dmon -s um in another terminal (u is utilization, m is framebuffer memory). You want to see memory in use AND sm utilization at 80%+. If memory is used but utilization sits idle, the model isn’t actually running on the GPU: the weights are loaded but the compute is happening on the CPU, so something’s misconfigured.

– Thermal check: nvidia-smi dmon -s pc during a heavy query (p shows power and temperature, c shows clocks; -s t is PCIe throughput, not temperature). If the card hits thermal limits (83°C+) and clocks drop, your Hashcenter airflow needs work. This is familiar territory: it’s the same reason a Bitmain S19 derates when the inlet air hits 40°C.

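– PCIe check: nvidia-smi -q | grep -i -A 2 "Link Width" (the Max and Current values sit on the two lines after the heading, so a plain grep prints only the label). x16 or x8 is fine. x1 will bottleneck large-model load times and large-context inference.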
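For a multi-card rig, the PCIe check is easier to read in CSV form. This sketch flags any GPU negotiating fewer than 8 lanes, using the threshold from the text. flag_narrow_links is a hypothetical helper name, and the sample rows are illustrative rather than captured output.

```shell
#!/bin/sh
# Hypothetical helper: reads "index, current_width, max_width" rows on stdin
# and flags any GPU negotiating fewer than 8 lanes.
flag_narrow_links() {
  awk -F', *' '
    $2 + 0 < 8 {
      printf "GPU %s: x%d link (max x%d), likely a riser or slot limit\n", $1, $2, $3
      bad = 1
    }
    END { if (!bad) print "all links x8 or wider" }
  '
}

# Illustrative rows in the shape nvidia-smi prints for this query.
printf '0, 16, 16\n1, 1, 16\n' | flag_narrow_links

# On a real machine:
#   nvidia-smi --query-gpu=index,pcie.link.width.current,pcie.link.width.max \
#     --format=csv,noheader | flag_narrow_links
```

A card that reports x1 with a max of x16 is the classic mining-riser leftover.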

6. Model not found / “manifest not found”

Symptom: ollama pull llama3.1:70b-instruct-q4_K_M returns Error: manifest not found.

Cause: Typo in the tag, the tag was renamed upstream, or your network can’t reach registry.ollama.ai.

Fix:

– Browse ollama.com/library and copy the exact tag. Don’t guess quant suffixes — they change per model.

– Test network: curl -v https://registry.ollama.ai/ should succeed. If it hangs, check your firewall, proxy, or DNS. Corporate and ISP-level DNS filtering occasionally blocks it.

– If you’re behind a proxy, set HTTPS_PROXY in the ollama service environment.
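When the network test fails, curl's exit status usually tells you which layer to look at. A minimal sketch, using curl's documented exit codes; explain_curl_exit is a hypothetical helper name and the advice strings are only suggestions.

```shell
#!/bin/sh
# Hypothetical helper: maps a curl exit status to the likely layer at fault.
explain_curl_exit() {
  case "$1" in
    0)     echo "reachable: the registry answered, so recheck the tag spelling" ;;
    5)     echo "proxy: could not resolve the proxy host" ;;
    6)     echo "dns: could not resolve the registry hostname" ;;
    7)     echo "network: connection refused or blocked" ;;
    28)    echo "timeout: firewall or filtering may be dropping the traffic" ;;
    35|60) echo "tls: handshake or certificate problem, often an intercepting proxy" ;;
    *)     echo "other: curl exit $1, see the EXIT CODES section of man curl" ;;
  esac
}

explain_curl_exit 6

# On a real machine (10 second cap so a hang becomes exit 28):
#   curl -sS -o /dev/null --max-time 10 https://registry.ollama.ai/
#   explain_curl_exit $?
```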

7. Ollama service won’t start

Symptom: systemctl status ollama shows failed or activating (auto-restart) in a loop. Port 11434 isn’t listening (ss -tulpn | grep 11434 returns nothing).

Cause:

– Port conflict — something else grabbed 11434.

– Corrupted model storage — a pull was killed mid-download and left a bad blob.

– Permissions on the ollama data directory.

Fix:

– journalctl -u ollama -n 100 is your best friend here. Read the actual error before doing anything else.

– Port check: ss -tulpn | grep 11434. If something else is there, stop it or change Ollama’s port (OLLAMA_HOST=0.0.0.0:11435 in the service environment).

– Storage check: ls -la ~/.ollama/ or /usr/share/ollama/.ollama/ (systemd install puts it under the ollama user’s home). Ownership should be ollama:ollama. If it’s root-owned from a manual ollama pull as root, fix it: chown -R ollama:ollama /usr/share/ollama/.ollama/.

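– Corrupted blob: if the logs mention a specific blob hash failing integrity, nuke the blobs and re-pull: sudo rm -rf /usr/share/ollama/.ollama/models/blobs && sudo systemctl restart ollama && ollama pull <model>. This deletes the data for every downloaded model, not just the bad one, so expect to re-pull each model you use.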
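For the port conflict case, it helps to see who actually holds 11434. This sketch pulls the process name out of ss -tulpn output. It assumes the local address is the fifth column and that the owner appears as users:(("name",pid=...)), which ss only shows when run as root. port_listener is a hypothetical helper name and the sample line is illustrative.

```shell
#!/bin/sh
# Hypothetical helper: reads `ss -tulpn` output on stdin and prints the name of
# the process listening on port $1.
port_listener() {
  awk -v port="$1" '
    $5 ~ (":" port "$") {
      if (match($0, /users:\(\("[^"]+"/)) {
        print substr($0, RSTART + 9, RLENGTH - 10)
      } else {
        print "unknown (rerun ss with sudo to see the owner)"
      }
    }
  '
}

# Illustrative line, not captured from a real machine.
sample='tcp   LISTEN 0      4096       127.0.0.1:11434      0.0.0.0:*    users:(("python3",pid=4242,fd=5))'
printf '%s\n' "$sample" | port_listener 11434   # prints: python3

# On a real machine:
#   sudo ss -tulpn | port_listener 11434
```

If the answer is ollama, the port is fine and the problem is elsewhere in the logs.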

8. Multi-GPU: only one card used

Symptom: You’ve got a 4× 3090 rig in your Hashcenter but nvidia-smi during inference shows only GPU 0 is active. The other three sit idle with ~100 MB used.

Cause: Modern Ollama handles multi-GPU automatically, but only when the model is big enough to need the spill. An 8B Q4 model fits comfortably on one 3090 (24 GB) and Ollama won’t split it across cards for no reason. This is working as intended — you just need a bigger model or a direct llama.cpp setup to flex the rig.

Fix:

– Update Ollama first — multi-GPU support matured significantly through 2024–2025 releases. ollama --version, then curl -fsSL https://ollama.com/install.sh | sh to upgrade.

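– Load a model that actually needs multiple cards: Llama 3.1 70B Q4 (~40 GB) spans 2× 3090s cleanly. To put all four to work, step up to something like 70B at Q8 (~75 GB). A 405B quant is well over 200 GB even at Q4, so it won’t fit in the rig’s 96 GB of combined VRAM.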

– For fine-grained control, use llama.cpp directly: llama-server -m model.gguf --tensor-split 1,1,1,1 -ngl 99. The --tensor-split ratios let you balance across cards of different sizes.

– CUDA_VISIBLE_DEVICES=0,1,2,3 (or a subset) scopes what each process can see. Useful when you want one model on GPUs 0–1 and another on 2–3.

– For production serving with queued concurrent requests, vLLM or SGLang outperform Ollama and llama.cpp — worth the setup cost if you’re running a shared endpoint.
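For mixed cards, one simple starting point for --tensor-split is to split in proportion to each card's total VRAM. This sketch assumes llama.cpp treats the values as relative proportions, as the 1,1,1,1 example implies. tensor_split_from_vram is a hypothetical helper name and the sizes are illustrative.

```shell
#!/bin/sh
# Hypothetical helper: turns one VRAM size per line (MiB) into a
# comma-separated proportion list for llama.cpp's --tensor-split.
tensor_split_from_vram() {
  paste -s -d, -
}

# Illustrative sizes: two 24 GB cards and one 12 GB card.
printf '24576\n24576\n12288\n' | tensor_split_from_vram   # prints: 24576,24576,12288

# On a real machine:
#   split=$(nvidia-smi --query-gpu=memory.total --format=csv,noheader,nounits \
#             | tensor_split_from_vram)
#   llama-server -m model.gguf --tensor-split "$split" -ngl 99
```

Treat the result as a first guess and adjust if one card runs out of memory before the others.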

Credit: the llama.cpp maintainers for the tensor-split implementation. Multi-GPU inference across heterogeneous cards is genuinely hard, and they shipped it.

9. Open WebUI can’t see Ollama

Symptom: Open WebUI container is running, you can log in, but the model dropdown says “No models available” or the chat just errors out.

Cause: OWUI can’t reach the Ollama backend. Networking between container and host is the most common culprit, followed by Ollama binding to localhost-only when OWUI expects to reach it over the network.

Fix:

– If Ollama is on the same machine as Docker: add --add-host=host.docker.internal:host-gateway to your docker run. On Docker Desktop (Mac/Windows) this works out of the box. On native Linux Docker you need the flag.

– Set the env var inside the container: -e OLLAMA_BASE_URL=http://host.docker.internal:11434.

– If Ollama is on a different machine entirely: OLLAMA_BASE_URL=http://192.168.1.50:11434 (or whatever the LAN IP is), and make sure Ollama binds to all interfaces: Environment="OLLAMA_HOST=0.0.0.0" in the ollama systemd service override. Restart ollama afterward.

– Confirm from the OWUI container’s perspective: docker exec -it open-webui curl -s http://host.docker.internal:11434/api/tags. If that returns a JSON list of models, OWUI will see them. If it hangs or 404s, the network path is broken.

– Full walkthrough in Open WebUI: The ChatGPT Experience.
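To turn the /api/tags check into a yes-or-no answer, you can count the models in the response. This sketch assumes each model object carries exactly one "name" key, as in the abbreviated sample. count_models is a hypothetical helper name and the sample body is illustrative.

```shell
#!/bin/sh
# Hypothetical helper: counts models in an /api/tags response read from stdin.
count_models() {
  grep -o '"name"[[:space:]]*:' | wc -l | tr -d ' '
}

# Abbreviated, illustrative response body.
sample='{"models":[{"name":"llama3.1:8b","size":4920753328},{"name":"gemma3:4b","size":3338801804}]}'
printf '%s\n' "$sample" | count_models   # prints: 2

# On a real machine:
#   docker exec open-webui curl -s http://host.docker.internal:11434/api/tags | count_models
```

A count of 0 with a working network path means Ollama is reachable but has no models pulled yet.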

Credit: the Open WebUI team for building the best self-hosted LLM UI in the ecosystem. Their troubleshooting docs are also excellent.

10. Context truncation / model “forgets” earlier in chat

Symptom: Long conversation, and somewhere past turn 10 the model starts ignoring the beginning. Facts you established early get forgotten. The model answers as if the first half of the chat never happened.

Cause: You’ve exceeded the model’s context window. Ollama silently truncates oldest tokens first — no warning, no error, just quiet amnesia.

Fix:

– Check the model’s actual context limit. Llama 3.1 and 3.3: 128K. Gemma 2: 8K. Gemma 3: 128K. Mistral 7B v0.3: 32K. Qwen 2.5: 32K native, 128K with YaRN. The AI Models library has the specs per model.

– Set num_ctx explicitly if you want more than the Ollama default (often 4096, sometimes 8192). In a Modelfile:

FROM llama3.1:8b

PARAMETER num_ctx 32768

Then ollama create llama3.1-32k -f Modelfile.

– VRAM reality check: large contexts are expensive. 128K context on a 70B model adds roughly 40 GB of fp16 KV cache on top of the weights, about as much as the Q4 weights themselves. A full context window roughly doubles the footprint you thought you had.

– If you genuinely need long-context work, rent a cloud GPU for that one job or build a RAG pipeline that retrieves only the relevant chunks at each turn.

11. Docker / WSL permission errors on pull

Symptom: ollama pull fails with “permission denied” or “read-only file system” inside a container.

Cause: You bind-mounted a host directory into the container, and the UID/GID inside the container doesn’t own it on the host. Classic Docker volume permission trap.

Fix:

– Use a named Docker volume, not a host bind mount: -v ollama:/root/.ollama. Docker manages ownership and you don’t have to think about it.

– If you need a host bind mount (for backup reasons), match the UID: docker run --user $(id -u):$(id -g) ... and chown the host directory to match.

– WSL2: if you’re running Ollama inside a Docker container inside WSL, consider just running Ollama natively in WSL. Fewer layers, fewer permission games. The native Linux install script works fine under WSL2.
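Before you bind-mount a host directory, you can check whether its owner matches the UID you plan to pass to --user. This is a minimal sketch in which a temporary directory stands in for your real models directory; dir_owner_uid is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: prints the numeric UID that owns a directory.
# Parses `ls -ldn` rather than `stat`, whose flags differ between Linux and macOS.
dir_owner_uid() {
  ls -ldn "$1" | awk '{ print $3 }'
}

# Stand-in for the host directory you plan to bind-mount.
models_dir=$(mktemp -d)

if [ "$(dir_owner_uid "$models_dir")" = "$(id -u)" ]; then
  echo "owner matches: --user $(id -u):$(id -g) can write here"
else
  echo "owner mismatch: chown the directory or change --user"
fi
```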

12. ComfyUI / Stable Diffusion specific issues

Symptom: ComfyUI throws CUDA out of memory when generating at 1024×1024 on SDXL or FLUX, even on a 12–16 GB card that should be enough.

Cause: Diffusion models peak VRAM during the middle layers when attention heads materialize full-resolution tensors. The average VRAM during generation is modest; the peak is what breaks things. FLUX.1 dev at fp16 is especially brutal.

Fix:

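– Launch flags: --lowvram when the card is tight (8 GB or less, and worth trying at 12 GB), --novram as a last resort. ComfyUI has no --medvram flag; that one belongs to AUTOMATIC1111’s web UI. With these flags ComfyUI swaps components in and out of VRAM on demand. Slower, but it fits.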

– Reduce resolution: generate at 768×768 or even 512×512, then upscale with a dedicated upscaler node. Final quality is often better anyway, and you skip the OOM.

– For FLUX.1 dev specifically: use the fp8 variant, not fp16. Roughly half the VRAM, imperceptible quality loss for most workflows.

– Close everything else. Browser tabs with WebGL, a running Ollama model, your desktop compositor — all of it takes VRAM.

– Baseline setup: ComfyUI for Plebs (coming soon).

Credit: the ComfyUI team for building the most extensible diffusion UI in the ecosystem, and for the node-based design that makes workflows shareable and debuggable.

Closing: same skill, new vocabulary

If you’ve made it this far, you’ve probably noticed something: every category of failure above maps cleanly onto things you already know from running miners.

- GPU not detected = hashboard not initializing. Same root-cause tree: driver/firmware, permissions, physical detection.

- OOM on load = PSU can’t deliver enough watts at startup. Pick a smaller load (smaller model, smaller quant, smaller context) or a bigger supply (bigger card).

- Slow tokens = underclocked miner. Thermal throttling, wrong config, bad PCIe link.

- Service won’t start = miner stuck in a boot loop. Read the logs, something will be obvious.

- Multi-GPU: only one card used = only one hashboard hashing in a three-board unit. Is the model big enough to even need all of them? Check the load before blaming the rig.

Troubleshooting is the same skill. AI has its own vocabulary for the same categories of failure: detection, memory, throughput, network, permissions. You already have the instincts. Now you’ve got the keywords.

When you’re stuck and this post didn’t cover it, the communities are active and responsive:

- github.com/ollama/ollama/issues — search before you post; the answer is probably already there.

- r/LocalLLaMA on Reddit — the single best real-time signal on what’s working and what’s broken in self-hosted AI.

- Open WebUI Discord — fast triage for OWUI-specific weirdness.

- HuggingFace model pages — always check the discussions tab for a model; known issues often live there.

From here, if you’re still assembling the stack, go back to the Pleb’s Guide to Self-Hosted AI for the big-picture map, or Install Ollama in 10 Minutes to rebuild clean. If you’re choosing where to run it, Used RTX 3090 for LLMs in 2026 and LM Studio vs Ollama vs llama.cpp cover hardware and runtime tradeoffs.

Sovereignty is a practice, not a product. Breaking, diagnosing, fixing — that’s the practice. See the Sovereign AI for Bitcoiners Manifesto for why this matters.

Further reading: The same pleb-grade infrastructure that runs local inference also runs a Bitcoin space heater. Many readers arrive from the mining side — see From S19 to Your First AI Hashcenter for the bridge.

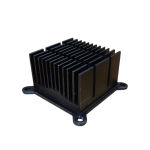

Bitaxe Heatsink — High-Performance Aluminum Cooler for Bitaxe & Nerdaxe Gamma / Supra / Ultra — Silent Operation & Stable Overclocking" width="80" height="80" loading="lazy" style="width:80px;height:80px;object-fit:contain;border-radius:6px;background:#1A1A1A;flex-shrink:0;">

Shop Heatsinks

Bitaxe Heatsink — High-Performance Aluminum Cooler for Bitaxe & Nerdaxe Gamma / Supra / Ultra — Silent Operation & Stable Overclocking" width="80" height="80" loading="lazy" style="width:80px;height:80px;object-fit:contain;border-radius:6px;background:#1A1A1A;flex-shrink:0;">

Shop Heatsinks